Dedicated to thoughts about software testing, QA, and other software quality related practices. I will also address software requirements, tools, standards, processes, and other essential aspects of the software quality equation.

Thursday, December 16, 2010

3 Ways to Stretch Your 2010 Training Dollars

Tuesday, September 14, 2010

New York City Voting Machine Problems - Implementation? We Don't Need no Stinking Implementation Plan!

$160 million spent and this was the result.

I like a "teachable moment," and this is one. It doesn't really matter how good the software is if you don't have the hardware plugged in!

http://newyork.cbslocal.com/2010/09/14/new-yorkers-head-to-the-polls-on-primary-day/

Wednesday, September 01, 2010

Do You Test SaaS, Or Does SaaS Test You?

Imagine you are sitting at your desk one morning and your phone rings. It's not your boss - it's your boss's boss, the CIO. He's upset because the online sales database is down and the entire sales staff is paralyzed. "The sales software is broken!" he exclaims. Then he asks, "Didn't you test this?" You take a deep breath and say, "No, that software is a service we subscribe to. We have no way to know when it changes." The CIO isn't happy, but now sees the reality of Software as a Service (SaaS).

I believe that SaaS is not a trend, but a major force that will shape the future of IT and, therefore, software testing. In fact, SaaS could dramatically change the way we think about and perform software testing from here on.

This article has a definite angle toward the risks of SaaS. I fully embrace the benefits and appreciate them, and I use SaaS myself, as you will see later in this article. My aim here is to point out the risks. Only you can weigh them against your own benefits.

What's Different?

1. You have little or no control over the software, the releases, and any remedies for problems.

In some cases, you may get the choice of when to accept an upgrade. However, even when you are on a specific version of the application, the vendor may choose to make a small change without advance notice.

In other cases, you may come in to work and notice that things on the application look a little different. Or, you get the dreaded phone call that something is broken.

But here's the real rub: Even if you isolate the problem and report it, you are still at the vendor's mercy to fix the problem.

With Commercial Off-the-Shelf (COTS) software, you at least get to choose when to deploy it, so you have the chance to test and evaluate it beforehand in a test environment.

2. You have almost zero knowledge of structure, and often no knowledge of new and changed functionality.

Forget white-box testing, unless it is for customized interfaces (for example, APIs). Now, let's say you have noticed some functional changes. What really changed? What stayed the same? What are the rules? When are they applied? Many times, none of this is published to the customers.

Not only is SaaS a black box, it is more like many black boxes, all in a cloud. Your SaaS application is most likely composed of many services, each with its own logic. In fact, some of the services may be supplied by a vendor you are not even aware of.

3. There is no lifecycle process for testing.

In the past, we could test at various levels - unit, integration, system, and UAT. That's all gone with SaaS. It's all UAT. Sure, you can test low-level functionality and integration, but it's from the customer's perspective, not the developer's. In the lifecycle view of testing, your tests can build on each other. In the UAT or customer view, testing is a "big bang" event. So, forget "test early and often." That changes to just "Test often."

4. Testing is post-deployment.

There may be some exceptions in the case of beta testing, perhaps. But for most people, you get to see the software only after it is deployed. That's too late to prevent problems. All you can do is race to find them quickly. In other words, you are in reaction mode instead of prevention mode. Then, even if you find the problems before your customers do, they may not be fixed for some time.

5. Test automation is fragile (and futile).

You get little return on investment, because automation pays off only when the same tests are repeated across releases. When a new version is released, there's a good chance your automated testware will not work, which means you are at risk of reworking your test automation at every release. That said, some people may find automated tests of basic functions a useful way to detect when changes have been made.
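Here is a minimal sketch in Python of that monitoring idea: keep a fingerprint of a few basic pages and compare against it on a schedule. The page names and captured text are made up for illustration, and how you capture the page content is left to whatever tool you already use. Treat it as one possible approach, not a finished monitor.

```python
import hashlib
import re


def fingerprint(page_text: str) -> str:
    """Return a stable hash of a page after collapsing whitespace."""
    normalized = re.sub(r"\s+", " ", page_text).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def detect_changes(baseline: dict[str, str], current_pages: dict[str, str]) -> list[str]:
    """Return the names of pages whose fingerprint differs from the baseline."""
    changed = []
    for name, text in current_pages.items():
        if baseline.get(name) != fingerprint(text):
            changed.append(name)
    return sorted(changed)


# Hypothetical page captures (assumed data, not from any real application).
last_week = {
    "login": "<h1>Sign in</h1>  <input name='user'>",
    "report": "<h1>Sales</h1>",
}
today = {
    "login": "<h1>Sign in</h1> <input name='user'>",  # only spacing differs
    "report": "<h1>Sales v2</h1>",                    # content changed
}

baseline = {name: fingerprint(text) for name, text in last_week.items()}
print(detect_changes(baseline, today))
```

A check like this will not tell you what changed or whether it matters. It only tells you that it is time to go look, which is often the most you can hope for with SaaS.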

6. Test planning is also futile.

This is because there is little detailed knowledge about the application in advance of using it. There is no specification basis for testing unless you write the specifications yourself. Use cases might be effective for describing work processes supported by the application.

Is There Any Hope?

Yes, and it's called validation. Not validation in the sense of "all forms of testing", but validation in the sense of making sure the software supports your needs.

In Point #6 I mentioned use cases. This is a form of test planning you can perform. You can create test scenarios based on work flows you perform in your organization. However, you must not think in terms of software behavior. Instead, you must describe work processes that can be tested no matter which software is being used.

You can create use cases to describe work processes, not software processes. These can be used as a basis for testing. The flows are perfect for mapping test scenarios.

You can assess the relative risk of work flows to prioritize your testing. You can even use pairwise testing to reduce the numbers of combinations of test scenarios and test conditions.
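To show what pairwise reduction looks like, here is a small sketch in Python. It uses a simple greedy selection, which is my own choice for illustration and not the algorithm of any particular tool, and the workflow conditions (customer type, payment method, channel) are hypothetical. It is fine for small sets of conditions; dedicated pairwise tools handle larger ones far better.

```python
from itertools import combinations, product


def pairwise_tests(parameters: dict[str, list[str]]) -> list[dict[str, str]]:
    """Greedily pick test cases until every pair of values from two
    different parameters appears in at least one chosen case."""
    names = list(parameters)
    candidates = list(product(*(parameters[n] for n in names)))

    def pairs_in(case):
        # Every pair of (position, value) items covered by one test case.
        return set(combinations(enumerate(case), 2))

    uncovered = set()
    for case in candidates:
        uncovered |= pairs_in(case)

    chosen = []
    while uncovered:
        # Take the candidate that covers the most still-uncovered pairs.
        best = max(candidates, key=lambda c: len(pairs_in(c) & uncovered))
        uncovered -= pairs_in(best)
        chosen.append(dict(zip(names, best)))
    return chosen


# Hypothetical conditions for an order-entry work process.
workflow_conditions = {
    "customer": ["new", "existing"],
    "payment": ["card", "invoice", "purchase order"],
    "channel": ["web", "phone"],
}

cases = pairwise_tests(workflow_conditions)
for case in cases:
    print(case)
print(len(cases), "cases instead of", 2 * 3 * 2)
```

Every pairing of two condition values still appears in at least one scenario, but you run a handful of scenarios instead of all 12 combinations. The savings grow quickly as you add conditions.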

However, only in certain situations will this help you avoid problems: 1) You are a beta tester or 2) you get to choose when to apply an upgrade.

There Will be Pain

You arrive at work on Monday morning and discover one of your SaaS applications has changed. You and your team scramble to test the high-risk scenarios (manually) and discover that many things are broken. You call the vendor only to learn that your wait time on hold is 30 minutes. You file issue reports and just get the auto-responder messages. Days go by. You keep trying, your manager tries calling, but hey, you're just one customer out of thousands. In many cases, there is no "Plan B."

This is the extreme end of the risk scale. Not all releases fail, and when they do fail, not all fail to this degree. However, the risk is real.

My Story

Just last week I experienced a problem that would have been very painful had it happened at a time I was teaching an online class. My online training services provider upgraded to a new version of the web presentation platform, but gave no notice. All they indicated was that the site would be down for maintenance on Friday evening. Turns out, they introduced a totally new and upgraded platform with several improvements. However, several key things failed: 1) I could record a session, but never get the file (I wasted an hour recording a session to find this out!), 2) I could not upload a file for presentation, 3) I could not install the new screen sharing software on Windows XP. There's not much you can do with web meeting software behaving like this.

I called tech support on Saturday. Guess what? Nobody home, even after a major release. I filed 3 problem tickets which as of this date have still not been closed out, even though the problems were fixed about 2 days later. (I did get one response.) I'm happy things are working now, but troubled about the way the release and post-release were handled. If I had been scheduled to teach an online class on Monday, I would have been stressed out for sure.

My case is fairly low-impact, but it did show me the risks of SaaS. I encourage you to keep these risks on your radar because like it or not, SaaS is in your future.

I would like to hear your experiences and ideas for testing SaaS, so leave a comment!

Monday, August 30, 2010

Software Test Automation Workshop in Oklahoma City - Sept 14 and 15, 2010

I'm excited to announce we're holding the Practical Software Test Automation workshop in Oklahoma City on Tuesday, September 14 and Wednesday, September 15, 2010.

The workshop will be held at the Hampton Inn, I-40 East (Tinker AFB) location at:

1833 Center Drive

Midwest City, Oklahoma, USA, 73110

1-405-732-5500

You can see the details (outline, pricing, etc.) and register at:

https://www.mysoftwaretesting.com/Practical_Software_Test_Automation_eLearning_p/pstaelearn.htm

GSA discounts are available for this workshop. Just contact me for details.

I hope to see you there!

Friday, August 13, 2010

U.S. To Train 3,000 Offshore IT Workers

Some key quotes:

"Despite President Obama's pledge to retain more hi-tech jobs in the U.S., a federal agency run by a hand-picked Obama appointee has launched a $36 million program to train workers, including 3,000 specialists in IT and related functions, in South Asia. Following their training, the tech workers will be placed with outsourcing vendors in the region that provide offshore IT and business services to American companies looking to take advantage of the Asian subcontinent's low labor costs.

Under director Rajiv Shah, the United States Agency for International Development will partner with private outsourcers in Sri Lanka to teach workers there advanced IT skills like Enterprise Java (Java EE) programming, as well as skills in business process outsourcing and call center support. USAID will also help the trainees brush up on their English language proficiency."

I don't know. I've stopped asking "why?" Back when outsourcing became all the rage we were told that in a new service economy people would rise to higher level positions. Instead, these US IT workers are trying to find the best position they can in an economy where there is a race to the bottom in terms of pay.

I know there are job training programs here in the USA, but even trying to find the front door to those programs is difficult. How often do you see those advertised? And now even those programs are being cut due to lack of funds. I guess we need to pay for other training programs for people in...Sri Lanka, Armenia and who knows where else.

I would love to hear your thoughts on this.

Sunday, August 01, 2010

The SaaS Performance Risk - An Example from Twitter.

Actually, there are many risks and concerns in the SaaS model. This is not to say the model does not have value, even great value. It's just that there are things you need to know before adopting SaaS.

The basic idea of SaaS is that you use software applications that are developed, hosted and maintained by a vendor. You trust that the processing will be correct, fast enough for you, secure, easy to use, available, reliable, etc. However, you have no control over those things. When the vendor has a problem, you have a problem. That can be a rude awakening to people.

To illustrate the performance risk, I saw an article recently about the overload at Twitter:

http://www.computerworld.com/s/article/9179446/Twitter_s_tech_problems_take_a_toll_on_developers?source=CTWNLE_nlt_app_2010-07-22

Twitter has a huge challenge with extreme traffic spikes. Now, on the upside, this is not a national security type of application, and most of us Twitter users have gotten used to the "Fail Whale" picture and just say, "Oh, well...I'll tweet that later."

Then, other things happen:

But there is a business impact to the companies that sell services interfaced to Twitter:

"'Twitter API issues in the previous weeks have been terrible for us,' said Loic Le Meur, founder and CEO of Seesmic, which makes Twitter client applications for various desktop and mobile platforms. 'Users always blame Seesmic first since it's their primary interface to Twitter. It's extremely frustrating because there is nothing else we can do than warning users Twitter has problems. It is very damaging for us since users start to look for alternatives, which fortunately have the same problems, but damage to the brand is done,' he said via e-mail."

To be fair to Twitter, they've done a lot to improve reliability in recent years.

In a related story, from last week, I read on Bloomberg.com:

"Interest in the SaaS (software as a service) delivery model is growing to the point that by 2012, almost 85 percent of new vendors will be focused on SaaS services, according to new research from analyst firm IDC. Also by 2012, some two-thirds of new offerings from established vendors will be sold as SaaS, IDC said."

http://www.businessweek.com/idg/2010-07-26/idc-saas-momentum-skyrocketing.html

So, what does all this mean to you?

If you are considering SaaS as a major application delivery method, then be aware of the risks. In reality, there isn't a lot you can do during a SaaS failure. For example, you can't just call up the folks at Twitter and tell them to "get it fixed" (like perhaps you can speak with the developers in your own company).

Some of this goes back to service-level agreements (SLA), but no vendor I know of will guarantee 100% uptime. Even if they did, there are other risks, such as correctness, not typically covered in an SLA.
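To put uptime percentages in perspective, here is a small Python sketch that converts an uptime figure into the downtime it still permits. The function name and the 30-day period are my own assumptions for illustration. Real SLAs define their own measurement periods and exclusions, such as scheduled maintenance.

```python
def allowed_downtime_minutes(uptime_percent: float, days: int = 30) -> float:
    """Minutes of downtime permitted by an uptime percentage over a period."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    total_minutes = days * 24 * 60
    return total_minutes * (100 - uptime_percent) / 100


# A few common-sounding targets (illustrative values only).
for target in (99.0, 99.9, 99.99):
    minutes = allowed_downtime_minutes(target)
    print(f"{target}% uptime allows about {minutes:.1f} minutes down per 30 days")
```

Even a 99.9% commitment leaves room for roughly 43 minutes of outage in a 30-day month, and nothing in that number says the outage won't land in the middle of your busiest hour.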

I wish I had a handy list of things to mitigate the risks, but every case is different.

I would be interested in hearing your experiences with SaaS and how you deal with the risks, so please leave a comment.

Friday, July 30, 2010

How to Create a Fear-Based Culture

Here is a great blog post from The Next Level Blog by Scott Eblin. Those who know me know I'm big on the human factors in software development and testing. A big part of that is culture. One of Dr. Deming's 14 points is to "Drive out fear." What does that mean? Well, here are seven ways NOT to do it. See if you recognize any of them. Have a great day!

http://scotteblin.typepad.com/blog/2010/07/seven-simple-rules-to-create-a-fear-based-culture.html

Wednesday, July 21, 2010

Practical Software Test Automation Course Now Available in eLearning!

I am excited to announce the release of my newest course, Practical Software Test Automation, in e-learning format.

This course focuses on the basics of software test automation and expands on those topics to learn some of the deeper issues of test automation. This course is not specific to any particular tool set but does include hands-on exercises using free and inexpensive test tools. The tool used for test automation exercises is Macro Scheduler.

The main objective of this course is to help you understand the landscape of software test automation and how to make test automation a reality in your organization. You will learn the top challenges of test automation and which approaches are the best ones for your situation, how to establish your own test automation organization, and how to design software with test automation in mind. You will also learn many of the lessons of test automation by performing exercises using sample test automation tools on sample applications.

I hope to see you there!

Click here to see the course outline.

Monday, July 19, 2010

Washington Post Series on Top Secret Sites is Shameful

Normally I don't get political on this blog, and actually, I don't really think this post is political. But I do think the topic is important.

Today, the Washington Post unveiled their series on the Top Secret work done by the Federal Government.

http://projects.washingtonpost.com/top-secret-america/companies/1/

Here's my problem: There will be a lot of innocent people placed at risk simply because of who they work for. Imagine this scenario: Joe Smith, a (fictitious) employee of a Top Secret government contractor, takes a trip to a quasi-friendly (or even unfriendly) country to perform work for another client. Joe winds up in a situation for some reason that involves police authorities in said country. They ask him where he works. He answers truthfully. They run that information through their systems and bingo, get a hit. (They know all the companies now because of this article.) Depending on the person running the query, Joe might be flagged as an agent. He certainly has knowledge of Top Secret information. Right?

Well...maybe, maybe not. However, try convincing an authority in a foreign country of that.

In fact, people don't even have to travel abroad. Now, our enemies know exactly where the offices of these companies are. They now have all types of targets for espionage and for recruiting spies.

Some will say the articles have a noble purpose to expose government waste. Is that something we don't already know?

Some may also say, like in a Tom Clancy novel, if the Washington Post can find this information, our enemies already know it. Yes, but they've made it really easy to find - all in one place, hyperlinked, with maps and all.

It will be interesting to see if any of the same people who were outraged over the "outing" of Valerie Plame will be outraged over this. I doubt it.

I think the Washington Post has abused the liberty of freedom of the press by publishing this series, but the damage has already been done.

Wednesday, July 14, 2010

New Testing Skills Needed for Companies that are Rebuilding Test Teams

It's still a tough economy, but some companies are starting to hire in IT again, even hiring software testers. That's an encouraging sign!

I've seen many companies struggle in the team rebuilding process, mainly due to the difficulty of getting people on the same basis of knowledge. The thing to consider is, when you start to bring new people into your teams, how will they build the skills they need to be effective on your team?

Here is what often happens. After a company starts to feel the pain of tasks left undone (for testing, that means defects going straight to customers and customers leaving), they start to rebuild the teams. So, you search for the best and brightest people, and hire who you can afford. These people have a mixed bag of skills and talents, all learned from various sources, some practices effective, some ineffective. And then, some people embellish their skills on the resume, so when they are hired they don't perform as expected. But, you still stick with them at least for a while.

Then, you have the faithful and the tough - the people who have been with the company for a long time and have never been formally trained.

If this situation is left "as is" you basically have a stew that is not very tasty.

What's the Solution?

1. Perform a Skills Assessment. This will tell you exactly where each person stands in their overall skill set.

2. Perform Training. This lays in place a foundation of common skills and terminology.

3. Perform Continued Mentoring. This reinforces the skills and may be needed for topics that the training can't reach, such as organization procedures, etc.

The sooner you can do this, the better, as long as you have most of the team in place. For the ones that join after the training, it's good to have e-learning available.

If you need help in getting your team's skills in place, contact me. I have over 60 courses in software testing and related topics. Tester certification is also a good approach for many teams.

I can create a custom training plan for your organization that will give you a head-start and boost your effectiveness as a team.

Tuesday, June 29, 2010

Is Software QA Dead?

Is traditional Software Quality Assurance (SQA) dead? Or...does it just suffer from poor perception and bad practice? I hope it is the latter.

I started thinking about this question after hearing a presentation recently that highlighted the problems with traditional SQA. The more I think about it, the more I believe we still need true SQA (not just testing). If you don't know the difference, please read on.

QA and QC

First, we must understand that true QA is not testing and it is not a verb. So, to say “then we QA it.” is like saying “then we configuration management it.”

SQA focuses on how a process is performed and is the management of quality. SQA can encompass metrics, process definition and improvement, testing, lifecycle definition, and so forth. The SQA function may perform some tests and reviews (quality control or “QC”) but unless there is quality management, the effort can easily become haphazard and uncontrolled.

Software testing is QC. So are reviews and inspections. The key difference is that the focus is on the product to find any defects. SQA and QC must work hand-in-hand to be effective.

SQA is process assurance, that is, assurance that the process is being performed as designed. QC is product assurance, or assurance that the product meets specifications. So, both activities are needed.

New vs. Old

I've been developing and testing information systems for over 33 years now. There's always something new that people think will change the way everyone develops software. Think about all the approaches and methods that have come down the pike – from waterfall to agile. Why do people still use the waterfall? Why are some people ardent evangelists of agile?

I think much is explained by comfort zones and culture. People tend to use approaches they are comfortable with. People don't like to change.

However, people do like to be fashionable. New approaches are fashionable which gives them early adopters who become enthusiastic supporters. After all, when was the last time you saw someone excited about the waterfall approach?

Process-orientation vs. Product-orientation

Back in the 70's and 80's, one of the big issues was that much focus was on the software, not on how it was built. So, the famous quote was “If builders built buildings the same way programmers write code, the first woodpecker that came along would destroy civilization.”

In 1985, I was performing eXtreme Programming; it just wasn't called that. I worked in tandem with another programmer, developed my tests first, then coded to them, and worked from user stories. The problem was inconsistency. We were the only two working this way. There was no one in management that wanted to spread the technique. In those days, like today, waterfall was king. Why? Because the waterfall model can be explained in about 10 minutes or less.

Software project consultants looked at this state of affairs and concluded that the process has a great deal to do with creating software and that software development should be an engineering effort, not an art or a craft. Hence the title “software engineer” and the years that followed of creating process models, such as the Capability Maturity Model (CMM). The CMM was very process-centric. In fact, the original version didn't have a key process area for software testing. The idea was that if the process was performed correctly, testing would not be needed.

The flaw in the process-only idea is that people are not perfect. Therefore, there will be mistakes at every step in building software, all the way from concept through system retirement. The only way to find the defects caused by these mistakes is to detect them by an effort designed to find them. A filtering approach where defects are screened out by inspections along with early and ongoing testing is a very effective approach.

The good part of the process focus is that it does typically deliver a better product with fewer defects injected throughout the project life cycle. This has been proven by organizations with high levels of process maturity who also measure defect detection percentage. (See chart.)
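For readers who have not measured defect detection percentage (DDP), here is a short Python sketch using the commonly cited definition: defects found before release divided by all defects eventually found, before and after release. The counts in the example are invented for illustration, and your organization may define its counting rules differently.

```python
def defect_detection_percentage(found_before_release: int, found_after_release: int) -> float:
    """Percentage of all known defects that were caught before release."""
    total = found_before_release + found_after_release
    if total == 0:
        raise ValueError("no defects recorded, so DDP is undefined")
    return 100.0 * found_before_release / total


# Hypothetical project: 90 defects caught by reviews and testing,
# 10 more reported by customers after release.
print(defect_detection_percentage(90, 10))
```

Keep in mind that the number is only known in hindsight, since the post-release count keeps growing for as long as customers keep finding problems.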

Indeed, processes provide a valuable framework to organize and perform all other project tasks. In short, processes can be improved, they can be shared and they can be trained.

SQA is a key mechanism by which improvements are made. In organizations it is common to find pockets of both good and bad practice. Improvement is rare, which caused people to see the need for processes to begin with. A few years back, Lewis Gray wrote a great article for Crosstalk Journal entitled, "No Hypoxic Heroes, Please", in which he makes a compelling case for software processes using the example of why mountain climbers follow processes and standards – which is to keep from making bad decisions when their minds start to become oxygen-deprived and the ability to reason is impaired. We see the same thing on software projects, especially as the deadline looms closer and closer.

Bad SQA Practice

It is possible to take any effective tool or approach and apply it in an ineffective way. Some organizations have built the SQA function into a bureaucracy which slows projects down and adds little value to the organization. In fact, defects may even increase due to people spending so much time performing paperwork. This was never the objective of SQA, “old school” or in any context.

Other organizations have turned SQA into a police force which investigates, audits and regulates software projects. The intent is noble, but the rest of the project lives in fear of the SQA team and what they can do to them.

In some organizations, SQA is a gatekeeper. To get software into production use, it must get QA approval. Once again, this is a negative view of what SQA is intended to perform. In reality, implementation should be a team-based decision based on risk.

The average lifespan for a SQA group is about two years. That's because after about two years, senior management asks, “What do these people do?” Unless the SQA team can show tangible and positive value, the decision is likely to be made to try something else to improve software quality. All too often, the SQA team's work is seen as intangible and paper-shuffling.

Back in 2000 I gave a keynote presentation at QAI's testing conference in which I made a major point that QA and test organizations must be in alignment with business objectives and project objectives, or else they will be marginalized and most likely eliminated. In other words, the QA and test teams must find the right balance of finding and preventing problems, as compared to not stopping progress.

I believe the only people who can direct that balance are the business stakeholders. These are the people who live with the level of software quality, or at least know what they want the business's customers to experience.

What Can Really Be “Assured”?

Not much, in my experience. We can't guarantee perfection since we can't test everything. Likewise, we can't assure a process has been perfectly performed. Even if a process is perfectly performed, the process itself can be flawed.

This leads me to my final point which concerns processes as performed professionally versus those performed in a factory setting. In a factory, you want everyone doing the same thing in the same way. You do not want any variation at all.

However, the more professional the effort and the person, the more you can rely on their expertise to do the job well. There are many examples of this, such as great chefs knowing how to prepare great meals without a recipe, or great doctors not reading the process book as they perform surgery. However, what is not seen are the rules, standards and protocols each of these professionals adheres to. For example, the chef cooks food to pre-established proper temperatures to prevent food poisoning. The surgeon has a team of people following an exact checklist to make sure all preparation is correct before the surgery and all surgical instruments are accounted for after the surgery.

In software development we rely on many people to get it right. All the way from concept to delivery, developers, business analysts, architects, testers, DBAs, trainers, management, customers and others must work together in professional ways. There may or may not be a formal software life cycle followed.

When things work well, no one seems to think much about software QA or testing. It's like the air conditioning - no one gives it a thought until it breaks down. However, when the product being delivered starts to slip and the customers start to leave, then management starts thinking “Maybe we need some structure in place to make sure we do the right things in the right ways.”

Smart QA

QA done well and done smartly can be a very helpful activity. The problem comes when people don't match the QA approach to the business and project context.

Instead of doing some basic root cause analysis and finding the true source of the problems, which can be addressed and prevented, some companies embark on major pushes to install a new QA program, SDLC or both.

Instead, how about some simple steps such as basic processes, checklists, guidelines and re-designed tests? Then, after seeing how those work, we can make further adjustments.

Conclusion

I don't think true QA (not testing) is dead, but I do think it suffers from bad practice and poor perception. I also believe there is a pendulum effect which swings from one extreme to another. In the case of software, the pendulum swings from “no process” to “all process”. Right now, we are at the “no process” end of the swing, with movement toward the center.

QA is a function that each organization must decide how best to adopt. Some will reject QA entirely, some will adopt it smartly and some will adopt it inconsistently or with bureaucratic approaches.

I hope you apply QA smartly. If you need help in doing that, call me!

Wednesday, June 09, 2010

Software Test Automation Workshop in the Dallas/Ft. Worth Area - Aug 12 and 13, 2010

I'm excited to announce I'm coming to the Dallas/Ft. Worth area in August to present my newest course - Practical Software Test Automation workshop!

Here are the details and how to register:

http://riceconsulting.com/home/index.php/Public-Seminars/randy-rice-to-present-test-automation-workshop-in-dallas-ft-worth-area-aug-12-and-13-2010.html

http://www.mysoftwaretesting.com/product_p/dfwauto.htm

I hope to see you there!

Want a class like this in your city or at your company? Let me know!

Thanks,

Randy

Friday, June 04, 2010

20 Year Anniversary of Rice Consulting Services Today

It was 20 years ago today...Sgt. Pepper taught the band to play....I mean we moved from Kansas City back to our home of Oklahoma City to start Rice Consulting Services. My first project was the Oklahoma City Water Trust, which was a major failure. I call it "the day I tested myself out of a job" because I asked whether or not the system had been stress tested. Turns out, it hadn't and it could not stand any load. The system never saw the light of day. You can read all about it here. It's worth your time.

Then, I got to know people like Bill Perry who gave me a great national platform with the opportunity to speak at the QAI testing conferences. Together we wrote Surviving the Top Ten Challenges of Software Testing, which opened many other doors.

Thanks to all of my friends and clients, who have supported us in the good and bad times.

Thanks to Janet, my wife and President of RCS, who is 100% behind this business and understands what small business is like. We eat what we shoot. We take risks that would make some people sleepless. There are no guarantees or bailouts for small businesses. We are small enough to fail, but by the grace of God, we keep going and serving our clients.

I feel like one of the farmers who won the lottery a few years back. When asked what they were going to do with the money, one of them said, "We're gonna keep farmin' til the money runs out."

So, I raise my cup of coffee to you, my friends who read this blog. Thanks for your support. I look forward to 20 more years. We will probably still be talking about how to write test plans. And, that is...well, depressing in a way, but it shows the never-ending job of skill building in testing.

Thanks, everyone!

Thursday, June 03, 2010

Agile and Exploratory Testing in Kansas City - July 13 and 14, 2010

Here are the details and how to register:

http://riceconsulting.com/home/index.php/Public-Seminars/randy-rice-to-present-agile-exploratory-workshop-in-kansas-city-area-july-13-and-14-2010.html

About the course: http://riceconsulting.com/home/index.php/Table/Agile-Testing/

There's a special discount for KCQAA members. I hope to see you there!

Want a class like this in your city? Let me know!

Thanks,

Randy

Friday, May 28, 2010

The Surprising Truth About What Motivates Us

http://michaelhyatt.com/2010/05/the-surprising-truth-about-what-motivates-us.html

Have a great and safe weekend...and remember those who have served and died for our country!

Randy

Thursday, May 27, 2010

Webinar Links - Elusive Tester to Developer Ratio

Thanks for tuning in to the webcast today.

Here are the links for the recorded session and chat transcript, along with other things I mentioned.

Recorded webinar

Chat transcript

Slides in PDF format

CTE-XL tool

Article - Elusive Tester to Developer Ratio (The one I wrote back in 2000)

Thanks!

Randy

Sunday, May 23, 2010

May 2010 Newsletter Posted

Better late than never! The May issue of the Software Quality Advisor Newsletter is out:

http://riceconsulting.com/home/index.php/Newsletter-Past-Issues/may-2010-test-estimation-based-on-testware.html

You can get your copy delivered to your e-mail account each month by signing up at:

http://riceconsulting.com/home/index.php/Newsletter/the-software-quality-advisor-newsletter-sign-up.html

Friday, May 21, 2010

Webinar - Thursday, May 27 - The Elusive Tester to Developer Ratio

To join the meeting, visit https://my.dimdim.com/ricecon on May 27 at 11:50 a.m., CDT. The webinar starts at 12:00 noon.

Wednesday, May 19, 2010

Book Review - Reflections on Management by Watts S. Humphrey with William R. Thomas

The Capability Maturity Model (CMM) and Capability Maturity Model Integrated (CMMI) have been major forces in software development for at least 20 years. Along with those, the Personal Software Process (PSP) and the Team Software Process (TSP) have also been applied to help make software projects more predictable and manageable.

This book is a collection of essays and articles written by Watts Humphrey, the man who was the influence and drive behind these models and processes. I found this book to be an interesting journey through the thinking of Humphrey as he clearly and rationally outlines the "why" behind the "what." Then, he describes "how" to do the work of managing intellectual and creative people who have to work together to deliver a technical product - on time, within budget, with the right features and with quality.

There are many gems in this very readable book (a great airplane book), such as:

- Defects are Not Bugs

- The Hardest Time to Make a Plan is When You Need it Most

- Everyone Loses With Incompetent Planning

- Every New Idea Starts as a Minority of One

- Projects Get into Trouble at the Very Beginning

- Managing Your Projects

- Managing Your Teams

- Managing Your Boss

- Managing Yourself

Although this book is a collection of essays, it flows very well and reads like it was written as one book. By the way, I felt the Epilogue was excellent - don't skip it.

If there are any doubts about the credibility factor of this book, the advance praise at the front of the book spans four pages and reads like a "who's who" of software development: Steve McConnell, Ed Yourdon, Ron Jeffries, Walker Royce, Capers Jones, Victor Basili, Lawrence Putnam and Bill Curtis, to name a few.

Whether you are fully immersed in the agile project world, or following the CMMI, or just trying to figure out the best way to plan, conduct and manage software projects, this is a book worth reading and taking to heart. In the advance praise, Ron Jeffries (www.XProgramming.com) writes, "I've followed Watts Humphrey's work for as long as I can remember. I recall, in my youth, thinking he was asking too much. Now that I'm suddenly about his age, I realize how many things he has gotten right. This collection from his most important writings should bring these ideas to the attention of a new audience: I urge them to listen better than I did."

Amen, Ron, amen.

Reviewed by Randy Rice

Disclosure of Material Connection: I received one or more of the products or services mentioned above for free in the hope that I would mention it on my blog. Regardless, I only recommend products or services I use personally and believe will be good for my readers. Some of the links in the post above are “affiliate links.” This means if you click on the link and purchase the item, I will receive an affiliate commission. Regardless, I only recommend products or services I use personally and believe will add value to my readers. I am disclosing this in accordance with the Federal Trade Commission’s 16 CFR, Part 255: “Guides Concerning the Use of Endorsements and Testimonials in Advertising.”

Test Estimation Based on Testware

However, there is a technique I have used over the years that plays on risk-based approaches. This technique can be applied to testware, such as test cases. Just remember this is not a scientific model, just an estimation technique.

What is Testware?

Testware is anything used in software testing. It can include test cases, test scripts, test data and other items.

The Problems with Test Cases

Test cases are tricky to use for estimation because:

They can represent a wide variety of strength, complexity and risk

They may be inconsistently defined across an organization

Unless you are good at measurement, you don’t know how much time or effort to estimate for a certain type of test case.

You can’t make an early estimate because you lack essential knowledge – the number of test cases, the details of the test cases and the functionality the test cases will be testing.

Dealing with Variations

“If you’ve seen one test case, you’ve seen them all.” Wrong. My experience is that test cases vary widely. However, there may be similarity between some cases, such as when test cases are logically toggled and combined.

A technique I have used to deal with test case variation is to score each test case based on complexity and risk, which are two driving factors for effort and priority.

The complexity rating is for the test case, not the item being tested. While the item’s complexity is important in assessing risk, we want to focus on the relative effort of performing the test case. You can assign a number between 1 and 10 for the complexity of a test case. It may be helpful to create criteria for this purpose. Here is an example. You can modify it for your own purposes.

1 – Very simple

2 – Simple

3 – Simple with multiple conditions (3 or less)

4 – Moderate with simple set-up

5 – Moderate with moderate set-up

6 – Moderate with moderate set-up and 3 or more conditions

7 – Moderate with complex set-up or evaluation, 3 or more conditions

8 – Complex with simple set-up, 3 or more conditions

9 – Complex with moderate set-up, 5 or more conditions

10 – Complex with complex set-up or evaluation, 7 or more conditions

This assessment doesn’t consider how the test case is described or documented, which can have an impact on how easy or hard a test case is to perform.

Assessing Risk

Risk assessment is both art and science. For estimation, you can be subjective. In fact, my experience is that risk assessment is subjective at some point or other.

This scale is based on the risk (impact) of the test case and its priority in the test. Like the complexity ranking, here are sample criteria you can adapt for your own situation:

1 – Lowest priority, lowest impact

2 – Low priority, low impact

3 – Low priority, moderate impact

4 – Moderate priority, moderate impact

5 – Moderate priority, moderate impact, may find important defects

6 – Moderate priority, high impact, has found important defects in the past

7 – High priority, moderate impact, new test

8 – High priority, high impact, may find high-value defects

9 – High priority, high impact, has found high-value defects in the past

10 – Highest priority, highest impact, must perform

Actually, the risk level could be seen from two perspectives - the risk of the item or function you are testing, or the risk of the test case itself. For example, if you fail to perform a test case that in the past has found defects, that could be seen as important enough to include every time you test. Not testing it would be a significant risk. The low risk cases would be those you could leave out and not worry about. Of course, there is a tie-in between these two views. The high-risk functions tend to have high-risk test cases. You could take either view of test case risk and be in the neighborhood for this level of test estimation.

Charting the Test Cases

To visualize how this technique works, we will look at how this could be plotted on a scatter chart. There are four quadrants:

1 – Low complexity, low risk

2 – High complexity, low risk

3 – Low complexity, high risk

4 – High complexity, high risk

Each test case will fall in one of the quadrants. One problem with the quadrant approach is that any test case in the center area of the chart could be seen as borderline. For example, in Figure 1, TC004 is in quadrant 4, but is close to the other quadrants as well. So, it could actually be in quadrant 1 if the criteria are a little off.

Figure 1
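To make the quadrant idea concrete, here is a minimal Python sketch. The quadrant numbering follows the list above, but the dividing line (scores above 5 count as "high") and the borderline margin are my assumptions for illustration, and the function names are hypothetical.

```python
# Assumption: scores of 1-5 are "low" and 6-10 are "high" on both scales.
MIDPOINT = 5


def quadrant(complexity: int, risk: int) -> int:
    """Return the quadrant (1-4) for complexity and risk scores of 1-10.

    1 = low complexity, low risk    2 = high complexity, low risk
    3 = low complexity, high risk   4 = high complexity, high risk
    """
    high_complexity = complexity > MIDPOINT
    high_risk = risk > MIDPOINT
    return 1 + int(high_complexity) + 2 * int(high_risk)


def is_borderline(complexity: int, risk: int, margin: float = 1.0) -> bool:
    """True when either score sits within `margin` of the dividing line.

    A borderline test case could land in a neighboring quadrant if the
    scoring criteria are a little off. The margin is an assumed value.
    """
    boundary = MIDPOINT + 0.5
    return (abs(complexity - boundary) <= margin
            or abs(risk - boundary) <= margin)


# Illustrative scores only, not the actual values plotted in Figure 1.
print(quadrant(6, 6), is_borderline(6, 6))
print(quadrant(9, 2), is_borderline(9, 2))
```

A test case scored 6 and 6 lands in quadrant 4 but is flagged as borderline, which is the kind of case that motivates a finer grid.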

For this reason, you may choose instead to divide the chart into nine sections. This “tic-tac-toe” approach gives more granularity. If a test case falls in the center of the chart, it is clearly in section 5 (Figure 2), which can have its own set of test estimation factors.

Figure 2

All You Need is a Spreadsheet

With many test cases, you would never want to go to the trouble of charting them all. All you need to know is in which section of the chart a test case resides.

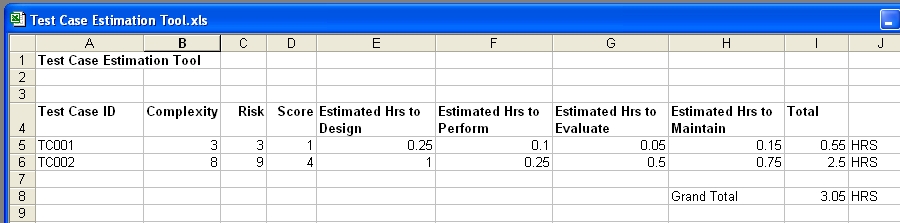

Once you know the complexity and risk scores, all you need to know are the sections on the chart. For example, if the complexity is 3 or less and the risk is 3 or less, the test case falls in section 1 of the nine-section chart. These rules can be written as formulas in a spreadsheet (Figure 3).

Figure 3
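If you would rather script the rules than write spreadsheet formulas, the same lookup can be sketched in Python. Only two facts come from the text above: complexity of 3 or less with risk of 3 or less is section 1, and the center of the chart is section 5. The band boundaries (1-3, 4-7, 8-10) and the left-to-right, bottom-to-top numbering of the other sections are assumptions, so adjust them to match your own chart.

```python
def band(score: int) -> int:
    """Map a 1-10 score to a band: 0 = low, 1 = middle, 2 = high.

    The 1-3 / 4-7 / 8-10 split is an assumed set of boundaries.
    """
    if not 1 <= score <= 10:
        raise ValueError(f"score must be between 1 and 10, got {score}")
    if score <= 3:
        return 0
    if score <= 7:
        return 1
    return 2


def section(complexity: int, risk: int) -> int:
    """Return the section (1-9) of the nine-section chart.

    Assumed layout: complexity runs across the columns and risk up the
    rows, so section 1 is low/low, 5 is the center and 9 is high/high.
    """
    return band(risk) * 3 + band(complexity) + 1


print(section(3, 3))    # the rule stated in the text: section 1
print(section(5, 5))    # center of the chart: section 5
print(section(10, 10))  # high complexity, high risk: section 9
```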

Sampling

So, what if you don’t have a good history of how long certain types of test cases take to perform? You can take samples from each sector of the chart.

Take a few test cases from each section, perform the test cases and measure how long it takes to set-up, perform and evaluate each test case.

You now extend your spreadsheet to include the average effort time for each test case (Figure 4).

Figure 4
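The arithmetic behind the extended spreadsheet is simple: average the sampled times in each section, then multiply each average by the number of test cases in that section. Here is a rough Python sketch. The section numbers, sample times and function names are made up for illustration.

```python
from collections import Counter
from statistics import mean


def average_minutes_by_section(samples):
    """Average the measured set-up, perform and evaluate time per section.

    `samples` maps a section number to a list of measured minutes.
    """
    return {sec: mean(times) for sec, times in samples.items() if times}


def estimate_minutes(case_sections, averages):
    """Total estimate: test case count per section times that section's average."""
    counts = Counter(case_sections)
    missing = set(counts) - set(averages)
    if missing:
        raise ValueError(f"no samples for sections: {sorted(missing)}")
    return sum(averages[sec] * n for sec, n in counts.items())


# Hypothetical sample measurements, in minutes.
samples = {1: [10, 14], 5: [30, 40, 50], 9: [120]}
averages = average_minutes_by_section(samples)

# The section each planned test case falls in (six cases here).
case_sections = [1, 1, 1, 5, 5, 9]
print(estimate_minutes(case_sections, averages))  # 3*12 + 2*40 + 1*120 = 236
```

The sketch refuses to estimate a section that has no samples, since that gap is exactly what sampling is meant to close.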

Adjusting

Your estimate is probably inaccurate. There is a tendency to believe the more involved and defined the method is, the more accurate the estimate will be. However, the reality is that any method can be flawed. In fact, I have seen very elaborate estimation tools and methods which look impressive, but were inaccurate in practice.

It’s good to have some wiggle-room in an estimate as a reserve. Think of this factor as a dial you can turn as your confidence in the estimate increases.
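One simple way to picture the dial is as a percentage added on top of the base estimate. This is only a sketch: the reserve percentages below are illustrative, not recommended values, and the function name is hypothetical.

```python
def with_reserve(base_estimate: float, reserve_fraction: float) -> float:
    """Add a reserve to a base estimate.

    `reserve_fraction` is the dial: 0.25 means 25% of wiggle-room.
    """
    if reserve_fraction < 0:
        raise ValueError("reserve_fraction cannot be negative")
    return base_estimate * (1 + reserve_fraction)


base = 236  # minutes, an illustrative base estimate
print(with_reserve(base, 0.25))  # early on, low confidence: 295.0
print(with_reserve(base, 0.0))   # dial turned all the way down: 236.0
```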

Conclusion

As with any estimation technique, at the end of the day, any number of things could impact the accuracy of the estimate. Estimates based on test cases can be helpful once you have enough history of measuring them.

Sampling can be helpful if you have no past measurements, or if this is a new type of project for your organization. It is still a good idea to measure the actual test case performance times so you can incorporate them in your future estimates.

I hope this technique helps you and provides a springboard for your own estimation techniques.

Tuesday, May 18, 2010

New Test Automation Class a Success!

Thanks to everyone that participated in last week's presentation of my newest workshop, Practical Software Test Automation in Oklahoma City. The class went well, we had a great time together, and I learned some adjustments I need to make. Thanks to the Red Earth QA SIG for their sponsorship.

We also got some positive buzz from Marcus Tettmar, the maker of Macro Scheduler. Thanks, Marcus.

http://www.mjtnet.com/blog/2010/05/13/new-software-testing-course-featuring-macro-scheduler/

We use Macro Scheduler as the learning tool in the course for test automation and scripting. By the way, version 12 has just been released. I look forward to trying it!

http://www.mjtnet.com/blog/2010/05/17/macro-scheduler-12-is-shipping/

The next presentation will be next month in Rome. If you live in Italy, or have a desire to take a testing class in a great location, join me there on June 16 and 17. I will also be presenting the Innovative Software Testing Approaches workshop that week in Rome.

Innovative Software Testing Approaches

http://www.technologytransfer.eu/event/984/Innovative_Software_Testing_Approaches.html

Software Test Automation

http://www.technologytransfer.eu/event/985/Software_Test_Automation.html

If you are interested in having this workshop in your city or company, just contact me!

Wednesday, May 12, 2010

The 900+ Point Drop in the Dow - A Real-Life Root Cause Analysis Challenge

The other day when the Dow dropped over 900 points, it was blamed on some trader someplace entering an order to sell "billions" instead of "millions" of P&G stock. To date, they still cannot produce the trade or the trader. Something doesn't smell right.

1. I would think that level of trade would require some sort of secondary approval.

2. Isn't there an audit trail of trades that would lead back to the trader?

3. If a billion dollar trade could do this, shouldn't there be an edit or at least a warning message, "You have entered an amount in the billions. Click OK to bring down the entire global financial system."

This makes me question if this was really the case. Other possibilities could be:

1. A run on stocks that was truly panic selling and this was a way to explain it away without spooking everyone else in the country. I guess this is the "conspiracy theory" view.

2. A software defect...and maybe not a simple one. This could be one of those deeply-embedded ones. I know a little about how the Wall St. systems and processes work and believe me, this is not beyond the realm of possibility.

It may be impossible to know for certain. One would have to perform a deep dive root cause analysis, go through the change logs (if they exist), look at the exact version of code for everything going on at that time, look at a highly dynamic data stream...you get the idea. That probably won't happen. If someone does manage to isolate this as a software defect and can show it, I nominate them for the Root Cause Analysis Hall of Fame, located in Scranton, Pa. (Don't go looking for that...but there is a Tow Truck Museum in Chattanooga, TN.)

Just a random thought...

Tuesday, May 04, 2010

Great Customer Service in Action at Blue Bean Coffee

However, people know great service when they see it.

This morning I was having coffee with my pastor at Blue Bean coffee here in south Oklahoma City (SW 134 and Western). All of a sudden, the lady behind the counter ran out the door with a can of whipped cream in her hand. Turned out she had forgotten to top off the customer's drink. She said, "That would have bothered me all day."

Wow. That made an impression. Really, it's not that big a deal, but in today's "lack of service" culture it stands out.

Compare that to my experience a couple of weeks ago at Starbucks, not too far away. I go in with my own mug and ask for the free cup of coffee they were promoting for Earth Day. (This happened on Earth Day!) The barista looked confused and went in the back office to ask the manager. He came back out and said, "Sorry, that was last week." "OK," I said. "I guess I'll have a tall bold." To which he replied, "We only have a little left." "Is it fresh?" I asked. "We change it every 30 minutes," he said. Now, you coffee drinkers out there know that a little coffee left on the heat for even a short time gets bitter, but I took my chances. Turned out, it was bad but I had already left. I complained to Starbucks online and got a good response back and two free drink coupons.

I was left wondering, though, how hard would it have been for the barista to simply say, "That promotion was last week, but here, have a cup anyway."? Perhaps he was not empowered to do that, or maybe he just wasn't thinking about the lifetime value of a customer.

Remember how Starbucks has tried all the other "ambiance" stuff - music, etc.? I applaud the efforts at creating an experience, but to me, the customer, I don't go to Starbucks for music. I go there for coffee and hope for decent service. Sometimes they are friendly and sometimes they aren't. For the longest time all they served was Pike Place. Then, finally, someone in corporate woke up and decided to start offering different blends again.

All I can say is that I'll use those Starbucks coupons on the road and get my local coffee at Blue Bean. It's more personal, more friendly and they have better coffee. And that's what it's all about - the coffee and the service.

Keep that in mind as you serve people in IT. If you give great service and have a great product, people will find you irreplaceable and keep coming back for more. You will be more personal and more valuable.

Thursday, April 29, 2010

StarEast 2010

A Deeper Dive into Dashboards

PowerPoint slides of the presentation (PDF)

Excel dashboard with macros

Excel dashboard without macros

The Xcelsius dashboard example

The full 16 minute video of how I created the dashboard

(The reason I give both versions is because the macro-enabled one will update if you are connected to the Internet.)

Here are a couple of the websites I mentioned:

www.dashboardspy.com

www.datapigtechnologies.com

The Elusive Tester to Developer Ratio

We were discussing how to leverage high ratio situations, like 1 tester to 10 developers and higher. The question was asked about how to get testers more on-board with doing unit testing. One suggestion I had was to provide them with a checklist. Here is a unit test workbench with two checklists. This is in Word format, so feel free to modify it to meet your needs.

By the way, I plan to have narrated versions of both presentations posted soon on my website.

Stay in Touch With Me!

There are several ways to stay in touch:

1) Sign up for my newsletter

2) Follow me on Twitter - @rricetester

3) Become a Facebook fan

I hold a monthly drawing for free books for my newsletter subscribers and Facebook fans. The next one is on May 1.

Thanks and have safe travels home!

Sunday, April 25, 2010

Oklahoma City Test Automation Training Workshop - May 13 and 14, 2010

This workshop is sponsored by the Red Earth QA SIG and hosted by Devon Energy. Thanks greatly to those organizations.

This workshop is not oriented to any specific tool, but the lessons and concepts are transferable to any tool. We will be using some free and inexpensive tools for the exercises, so bring your own computer.

Nervous about scripting? That's OK. You will be able to work at a pace that suits you, and I, along with others in the workshop, will be there to help.

To learn more and to register, just visit http://www.mysoftwaretesting.com/product_p/okcauto.htm

If you would like for me to come to your company and present the workshop, just call me at 405-691-8075.

Thanks!

Randy

Friday, April 23, 2010

My Take on the McAfee Mess

On April 21, McAfee released an update to their anti-virus application that disabled systems running Microsoft Windows XP SP3. This caused a bad day at McAfee, but even worse days at all the companies and homes that were impacted.

You can read the early account here:

http://www.computerworld.com/s/article/9175928/The_McAfee_update_mess_explained?source=toc

Then, today, a more detailed explanation and apology was issued:

http://www.computerworld.com/s/article/9175940/McAfee_apologizes_for_crippling_PCs_with_bad_update?source=CTWNLE_nlt_dailyam_2010-04-23

The bottom line was a critical defect was missed and made its way to customers. Here are my observations as an interested bystander and software testing consultant:

1) The apology was cryptic for a technical audience. "We recently made a change to our QA [quality assurance] environment that resulted in a faulty DAT making its way out of our test environment and onto customer systems." However, no explanation of the change was given. Was a platform removed, or skipped? Was a test case skipped? Was there a rush to get the update out? Why was the environment changed? The blame seems to be on the change to the environment.

2) The risk was very high. This is the tester's worst nightmare and an example of what you don't want your company to go through, or your customers. This is a credibility-basher. I work with some software companies that say "We don't care about the risk. If there are problems, we'll just post a hotfix on the web site." Right.....

3) Testing can't find all the defects. However, testing is an easy role to blame. At least they didn't blame the testers - they blamed the process and the environment, which is probably the appropriate place to focus.

4) This points out a big risk for COTS applications - applying an update without testing it. I know the updating process is automated for large companies. However, one of the things I teach in my COTS testing class is to test the updates before rolling them out to the entire company. I suspect this will be one of those lessons learned for many people. The really troubling thing is for individuals who get impacted. They have no "test" PCs.

5) The customers want to hear from the CEO over this. So, your CEO doesn't seem to care about testing? This is a good case study to show why they should care.

6) Your test is only as good as your environment. You may have great tools, great testers and great processes, but if you have gaps in your environment, you don't know for sure what you are testing.

7) This is one of those head-bangers. Apparently, this was not one of those deeply-embedded defects, but one that could have been found just in a simple update to a commonly-used platform. This is one of those defects that leaves management and customers asking, "Why didn't you guys test that?" (I refer you back to observation #1.) They aren't saying for sure.

8) More defects will escape in the future. The only question is, what will the impact be? If you really want a scare, take this scenario out to medical devices, aircraft, automobiles, utilities and other safety-critical applications. No matter how hard we try, there will still be defects because we can't test everything. That's not an easy reality to embrace because many people have grown to trust that software just works - mostly. Testers know better.

OK, enough of being the armchair quarterback. This is serious and frustrating, but not the end of the world. A few weeks from now, it will all be forgotten. Actually, that's part of the problem. We experience the pain, the pain goes away, then we experience it again...and again.

Your thoughts?

Thursday, April 22, 2010

Striving for Quality has Real Payoffs

http://www.computerworld.com/s/article/9175836/Striving_for_quality_has_real_payoffs?taxonomyId=14&pageNumber=1

The common misconception is that the 20th century quality gurus, like Deming, Juran and Crosby, were out there teaching about quality for the sake of virtue. The reality is that they taught quality concepts as a way to build the bottom line in a company by gaining customers and serving existing customers better. That's why I get really annoyed at people who dismiss quality improvement as an optional thing. I agree that "quality" has been so overused it fails to resonate anymore with people, but so does "safety" until you get hurt or killed. The problem is not the concept but our thinking (or lack of attention).

Read this article and learn how to make the case to management. The great thing about this article was that management made the mandate to IT. And that is exactly the direction the quality mandate should flow.

Have a quality day!

Randy

Wednesday, April 21, 2010

Technology is Just the Tool

- Joseph Priestley, 18th-century theologian and educator

Isn't it interesting that this was written in the 18th century? It's like the idea that instead of technology making our lives easier, it has done just the opposite. Technology is just the tool. You can use any tool relentlessly and still wear yourself out. You can use any tool in an ineffective way.

One of my favorite lines from a movie is when Pappy O'Daniel says in "O Brother, Where Art Thou?" as he and his entourage are walking into the little radio station, "We ain't one-at-a-timin' here. We're MASS communicating!"

Sure, technology has changed the means of communication. What it has not changed is how we really connect with someone.

Saturday, April 17, 2010

10 Reasons Why You Aren't Done Yet

http://michaelhyatt.com/2010/03/10-reasons-why-you-aren%E2%80%99t-done-yet.html

Friday, April 16, 2010

It's About Communication

I ran across an interesting article this morning at computerworld.com about how one CIO does this:

CIO says communication is key to buy-in

No matter which role you are in, communication is a big part of your job, whether you practice it or not. Here are some quick tips:

1) Be intentional

You have to keep communication in mind and be thinking all the time, "Who needs to know this?" You also have to be thinking "What do I need to know?" and "Whom should I be speaking with today?"

2) Do not confuse e-mail and meetings with communication

These can be vehicles for communication, but they have flaws. E-mail fails to convey the tone of our communication and meetings can drift into discussions of many topics. Just pick up the phone or walk down the hall if you really want to touch base with someone.

3) Practice

Yes, practice how you say things. Learn the words that carry meaning and power. Learn how to control your body language and read the body language of others. Do you have a big presentation in your future? Do you have an important conversation with someone soon? Practice!

4) Read before sending

So you've written an e-mail, status report or some other piece of written communication. Take an extra 60 seconds and read back through it. Place yourself in the role of the people who will receive it. How would you feel if you were the recipient? Are there any obvious gaps, typos, or other mistakes? Testers get nailed on this because we are highlighting others' mistakes. People aren't perfect, but try to find mistakes the best you can.

A really good book on this subject is Naomi Karten's "Communication Gaps and How to Close Them".

I hope I have communicated my thoughts on this well! Your thoughts?

Have a great day.

Randy

Thursday, April 08, 2010

Being a Linchpin in a Process-Oriented Environment

In Linchpin, Seth Godin writes about becoming indispensable at our jobs by becoming more unique. We become more unique by letting the artist in us shine forth in what we do. Godin admits there are times you would not want this, like for airline pilots, etc. However, that specific example caused me to think about Captain Sullenberger who skillfully guided the U.S. Airways jet to a safe landing in the Hudson River. That was artistry. My friend Frank pointed out this was not because of his training from the airline, but because he knew how to fly gliders. Interesting.

In a factory setting, you want people making the same things in the same way. Dr. Deming taught people to remove variations in the process. He taught that processes should be so well defined that anyone with the right training could perform them with identical results. We can apply that in some ways to software development. So, how does one let their artistry shine in that kind of process-oriented environment?

Frank observed that Quality Circles was a technique Dr. Deming taught to let the workers in a process have a voice in improving it. Those people who are good at seeing problems and suggesting changes to the process that save the company money are linchpins and artists. You would not want people to start changing the process whenever or however they want, but you do want to know how to improve the process at the right time, in the right way.

Certainly Dr. Deming valued the person and how they contribute to quality. However, he was not a proponent of surviving by heroic efforts. The problem with heroic efforts is that they reward those who do sloppy work and then swoop in to fix it, while those who consistently do good work get little recognition.

Unfortunately, too many good people suggest good ideas to management and those ideas are ignored. These people often leave for companies that do value their ideas. They can do that and get more money in the process because they add value. They are linchpins. This applies to leaders as well. Leaders who listen to ideas will rise and succeed, while those who don't will continue to struggle.

Your thoughts?

Randy

Friday, April 02, 2010

Book Review - Linchpin by Seth Godin

For a long time I have been telling software testers there is someone out there, either in their city or halfway around the world, that can test faster and cheaper than they can. Like it or not, software testing has become a commodity for many organizations. It doesn’t matter who is doing the testing, how they are doing it, only that testing is being done at a cost they are willing to pay.

These are the same companies who call me up to get “two days of training on testing” then have no idea what their needs are, what will happen after the class, or even who will be the instructor. They just want “a pound of training” as I call it. This type of attitude means you need a point of distinction. That’s what this book is all about – how to become indispensable by letting the artist in you emerge.

Think of the companies that lay off hundreds or thousands of people all at once. How were those people chosen? They didn’t lay off everyone, so who got to stay and why? It wasn’t the people who obediently played by the rules and didn’t make waves. The ones who stayed were difference makers – linchpins.

This book takes you on a journey. It starts with some background of how we got here. After all, for all of human history except the last 60 years or so, people lived without the idea of being taken care of by a company. Now, we find ourselves in a different ballgame with a whole new set of rules. Having a good job is no longer the definition of success. Lots of “good jobs” have gone away, never to return.

The journey continues to discuss how to become that person that the company values so highly, they will do anything to keep you – the linchpin upon which their success depends.

Of course, becoming and staying a linchpin isn’t easy. There is a lot of resistance to stay in your comfort zone. Godin goes into detail about the choice required to become a linchpin and why it’s the choice between being remarkable and being a cheap drone.

The brilliant thing about this book is that Seth goes into depth about “the resistance”, the thing that keeps us from being unique and taking the risk to let our special gifts shine. It is so easy to want to blend in and go with the flow, but Seth makes a compelling case that this is the path toward extinction.

After reading the book and thinking of my experiences in consulting in many companies, I realize that the only difference between many companies and a chicken slaughterhouse is the lack of chickens. Every day people dutifully report for duty, keep their heads down, do what they are told to do (or not to do), then go home and do it all again the next day. The work is mindless, requires no creativity and at the end of the day, people leave unfulfilled and unappreciated – and a bit bloody.

At times I felt conflicted as I read Linchpin. I identify with being the artist. I tend to procrastinate, so the idea of setting a ship date for something and then shipping no matter what has actually helped to get some things done. On the other hand, I have seen so many companies suffer from the deadline mentality I can’t totally embrace that idea.

I think there is balance in the decision to ship or not to ship. In some cases, shipping bad stuff on the deadline can be very bad. In other cases, it can be the first faltering steps toward a great eventual product. I think it all depends on how understanding people are. For things like cars, airplanes and pacemakers, it’s a bad idea to ship just based on the deadline being reached.

The other point I have difficulty with is the idea of not being attached to your worldview. I agree that we need to be able to see and understand other people’s worldviews. My point of departure comes in being so unattached that you have no stable set of beliefs. I think it’s important to know why you hold your worldview and even be willing to question it. I also think it’s fine to be passionate about your beliefs. The key is whether or not your beliefs are based in truth. I know, I know, this opens up a great philosophical discussion about “what is truth?” I just think you can be a linchpin and still be true to your beliefs.

Finally, perhaps the most troubling realization of all is that the book contradicts one of my closely held beliefs: the idea of systematizing processes. I really think Dr. Deming had it right about defining a process so that anyone could perform it without error. This is a good thing for factories, hospitals and fast food places that rely on consistency of results. However, when it comes to intellectual work, we need the creativity of the artist.

This leads me to the application of this idea to software testing. On one hand, we need high levels of accuracy and repeatability for some types of testing. On the other hand, we need the creativity of the artist for discovering new defects and ways of performing testing.

This is a great book, an essential book, for anyone in today’s marketplace. Both young and not-so-young will be better prepared to deal with the world by reading Linchpin. This is an easy read and I especially like the way Seth fleshes out his ideas in a stream of coherent small sections of writing. This alone has changed how I plan to write my future books - many of which now have publication dates! Thanks, Seth, for another insightful and timely book.

I will be continuing these thoughts here on my blog in the coming days, so come on and join the discussion!